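Every security operations center (SOC) faces the same dilemma: the tools designed to protect the organization are drowning analysts in a flood of noise. Organizations now receive an average of 2,992 security alerts per day, yet 63% of them go unaddressed (Vectra AI). This gap between what is flagged and what is actually investigated is where breaches begin. According to the 2025 SANS Detection and Response Survey, 73% of security teams cite false positives as their biggest detection challenge, while 76% of organizations name alert fatigue as a top SOC challenge (Cybersecurity Insiders 2025). This guide explains what alert fatigue is, how to measure it, how it relates to compliance, and how to resolve it with a phased roadmap.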

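Alert fatigue is the desensitization that SOC analysts experience when faced with an overwhelming, sustained volume of security alerts. It leads them to miss genuine threats, respond to them late, or ignore them altogether, which degrades the quality of threat detection and raises organizational risk.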

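The concept originated in healthcare, where clinical staff become desensitized to the constant sounding of medical device alarms, a phenomenon known as "alarm fatigue." Cybersecurity adopted the term through the 2010s and 2020s as SIEM adoption and detection-tool sprawl accelerated. The psychological mechanism is identical in both fields: when notification volume exceeds human processing capacity, people stop responding to all notifications, including the important ones.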

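The scale of the problem is well documented. Organizations receive an average of 2,992 security alerts per day (Vectra AI), down from 3,832 in 2025 and 4,484 in 2023. Falling volume alone has not solved the problem, however: 63% of those alerts still go unaddressed, and 76% of organizations cite alert fatigue as a top SOC challenge (Cybersecurity Insiders 2025). Simply reducing the number of alerts is not a solution. What matters is signal quality.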

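Alarm fatigue emerged in clinical settings, in hospitals where the constant beeping of patient monitors left nursing staff desensitized to critical warnings. Alert fatigue applies the same phenomenon to cybersecurity, specifically to security monitoring alerts in SOC environments. Both share the same underlying mechanism: excessive notification volume causes desensitization, and critical signals get missed. This page covers the cybersecurity context only.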

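Alert fatigue stems from false positives, tool sprawl, manual triage, growing alert volume, and staffing shortages that compound one another across fragmented SOC environments. An ACM Computing Surveys study groups the causes into four structural categories, while IBM's taxonomy expands them to six. What follows is a consolidated view based on the latest data.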

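SIEM platforms aggregate alerts from hundreds of sources, often without adequate correlation or deduplication. Endpoint detection and response (EDR) tools generate endpoint-level alerts whose volume grows in proportion to the number of managed devices. Only about 59% of tools send their data to the SIEM automatically (Microsoft/Omdia 2026), so analysts must correlate the remaining data by hand. As a result, a single incident can trigger dozens of separate alerts across platforms, each requiring its own investigation.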
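To make the deduplication gap concrete, the following is a minimal sketch of collapsing repeated alerts for the same asset and indicator into a single group. The `Alert` fields, the `deduplicate` function, and the 15-minute window are all assumptions chosen for illustration; they are not taken from any particular SIEM or EDR product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    source: str          # tool that raised the alert (hypothetical labels such as "siem", "edr")
    host: str            # affected asset
    indicator: str       # what was observed, e.g. a file hash or destination IP
    timestamp: datetime


def deduplicate(alerts, window=timedelta(minutes=15)):
    """Group alerts that share (host, indicator) and arrive within
    `window` of the previous alert in the same group."""
    groups = []
    latest_group = {}  # (host, indicator) -> most recent group for that key
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        key = (alert.host, alert.indicator)
        group = latest_group.get(key)
        if group is not None and alert.timestamp - group[-1].timestamp <= window:
            group.append(alert)      # same activity, still within the window
        else:
            group = [alert]          # first sighting, or the window has lapsed
            latest_group[key] = group
            groups.append(group)
    return groups
```

Each returned group can then be investigated once instead of once per alert. The window length is a tuning choice: a longer window merges more aggressively but risks folding unrelated activity into one group.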

Alert fatigue leads to missed breaches, billions of dollars in triage costs, and analyst burnout, and it opens exploitable gaps in insider threat detection.

Table: Quantified impact of alert fatigue across financial, operational, and human dimensions.

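Case study: the Target breach (2013). FireEye's detection system identified the malware, but analysts missed the alert, which was buried among thousands of daily notifications. Forty million payment card records were exposed as a result. It is a textbook example of how alert fatigue translates directly into breach impact.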

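Case study: the Equifax breach (2017). A patch notification for CVE-2017-5638 was buried in the triage backlog, and 147 million records were ultimately exposed. The failure occurred at the incident response stage rather than the detection stage: a critical alert was lost in routine operational noise.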

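Insider threats pose a distinct challenge. Behavioral alerts, the primary indicator of insider risk, are inherently noisy because legitimate user activity closely resembles early-stage insider activity. When fatigued analysts deprioritize these alerts, insider threats go undetected for longer. With insider risk costing an average of $17.4 million per year (Ponemon 2025), the stakes of ignoring behavioral detection alerts are high.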

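Sophisticated attackers deliberately generate large volumes of alerts to overwhelm SOC analysts and conceal their real intrusion activity. This tactic maps to MITRE ATT&CK Defense Evasion (TA0005), specifically Impair Defenses (T1562). Intezer's 2026 research found that enterprises in which roughly 1% of real threats hide among low-severity alerts miss about 50 genuine threats per year. Attackers actively exploit that gap.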

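Measuring alert fatigue requires tracking the false positive rate, the share of alerts that go uninvestigated, mean triage time, and analyst turnover against industry benchmarks. Without quantifiable cybersecurity metrics, organizations cannot identify, track, or report on alert fatigue, and they cannot justify changes to budget or tooling.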

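The most effective approach starts with establishing a baseline before changing anything. As Fortinet's SOC metrics guide recommends, organizations should collect baseline metrics over at least one full operational cycle before implementing improvements.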

Table: Diagnostic scorecard for measuring and tracking the severity of alert fatigue in SOC operations.

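Signs of alert fatigue in a SOC include a rising share of uninvestigated alerts, longer mean triage times, a falling alert-to-incident conversion rate, and rising analyst turnover. Track these metrics monthly and compare them with your internal baseline and the industry benchmarks above.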
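The alert-level indicators can be computed from an export of alert records. The sketch below is one possible way to do that, not a standard formula. The `AlertRecord` fields and the `fatigue_metrics` function are hypothetical, and two definitions are assumptions made for this example: the false positive rate is taken over investigated alerts only, and the alert-to-incident conversion rate is confirmed true positives divided by all alerts. Analyst turnover comes from HR data and is not covered here.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class AlertRecord:
    investigated: bool                # did an analyst open the alert?
    verdict: Optional[str]            # "true_positive", "false_positive", or None
    triage_minutes: Optional[float]   # time from alert creation to verdict


def fatigue_metrics(records):
    """Return alert-level fatigue indicators for one reporting period."""
    total = len(records)
    if total == 0:
        raise ValueError("no alert records for this period")

    investigated = [r for r in records if r.investigated]
    false_positives = [r for r in investigated if r.verdict == "false_positive"]
    true_positives = [r for r in investigated if r.verdict == "true_positive"]
    triage_times = [r.triage_minutes for r in investigated
                    if r.triage_minutes is not None]

    return {
        # share of all alerts nobody looked at
        "uninvestigated_rate": (total - len(investigated)) / total,
        # assumption: measured over investigated alerts only
        "false_positive_rate": (len(false_positives) / len(investigated)
                                if investigated else None),
        "mean_triage_minutes": mean(triage_times) if triage_times else None,
        # assumption: confirmed true positives over all alerts
        "alert_to_incident_rate": len(true_positives) / total,
    }
```

Running this once per month on the same export and storing the results gives a trend line to compare against the internal baseline.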

Reducing alert fatigue requires a phased approach that runs from rule tuning and enrichment through to AI-driven triage and behavioral detection.

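The 30-, 60-, and 90-day roadmap below lays out a systematic rollout: first eliminate the sources of noise, then build enrichment and correlation, and finally introduce strategic automation.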

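Start by identifying the ten noisiest detection rules by alert volume. Measure the false positive rate for each rule, and disable or tune any rule above 50%. Add exception lists for known-benign activity patterns. Schedule a monthly tuning review rather than treating optimization as a one-off task.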
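The first two tuning steps can be sketched in a few lines. This example assumes triaged alerts are available as `(rule_id, is_false_positive)` pairs; that input shape and the `rules_to_tune` name are illustrative assumptions, while the top-ten cut and the 50% threshold come from the guidance above.

```python
from collections import Counter


def rules_to_tune(triaged_alerts, top_n=10, fp_threshold=0.5):
    """Return (rule_id, alert_count, fp_rate) for the noisiest rules
    whose false positive rate exceeds `fp_threshold`.

    `triaged_alerts` is an iterable of (rule_id, is_false_positive) pairs.
    """
    volume = Counter()
    false_positives = Counter()
    for rule_id, is_false_positive in triaged_alerts:
        volume[rule_id] += 1
        if is_false_positive:
            false_positives[rule_id] += 1

    flagged = []
    # most_common() yields rules in descending order of alert volume
    for rule_id, count in volume.most_common(top_n):
        fp_rate = false_positives[rule_id] / count
        if fp_rate > fp_threshold:
            flagged.append((rule_id, count, fp_rate))
    return flagged
```

The output is a review list, not an automatic disable list. A rule with a high false positive rate may still be the only coverage for a given technique, in which case tuning or an exception list is the safer choice than turning it off.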

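AI-driven triage platforms can automate more than 95% of Tier 1 alert investigation work (Torq 2026). Organizations that use AI extensively shorten the breach lifecycle by 80 days and save roughly $1.9 million on average (IBM 2025). Eighty-seven percent of security practitioners expect to expand their use of AI in security operations (Vectra AI).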

Gartner cautions, however, that an AI-enabled SOC does not automatically reduce headcount needs. Instead, it redefines the skills required. Automated triage frees analysts from repetitive work, but organizations still need experienced operators to investigate escalated signals and tune AI models.

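Alert fatigue can cause breaches to be detected only after NIS2, GDPR, and CIRCIA reporting deadlines have passed, exposing the organization to regulatory penalties and its executives to personal liability. No major competitor page draws the connection between alert fatigue and regulatory incident-reporting deadlines, but the link is direct: when triage delays slow detection, organizations miss their mandatory notification windows.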

Table: How alert fatigue delays breach detection past regulatory reporting deadlines.

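Alert fatigue is also exploited through techniques catalogued in MITRE ATT&CK, specifically under Defense Evasion (TA0005): Impair Defenses (T1562) and Masquerading (T1036). Mapping compliance requirements to alert fatigue metrics gives SOC leaders a clear basis for justifying investment in security frameworks and improved detection accuracy.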

Modern approaches address alert fatigue through signal-first detection, a SOC visibility triad architecture that enables correlation, and agentic AI that autonomously investigates every alert.

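The industry is converging on a clear direction. In 2026, the agentic AI SOC has become the dominant solution paradigm. CrowdStrike, Swimlane, Prophet Security, Gurucul, and Radiant Security all announced agentic platforms in early 2026. Swimlane reports that its AI SOC achieved a 99% Tier 1 incident resolution rate and a 51% reduction in MTTR. The shift is from alert-centric to signal-centric detection, which reduces detection volume through correlation rather than suppression.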

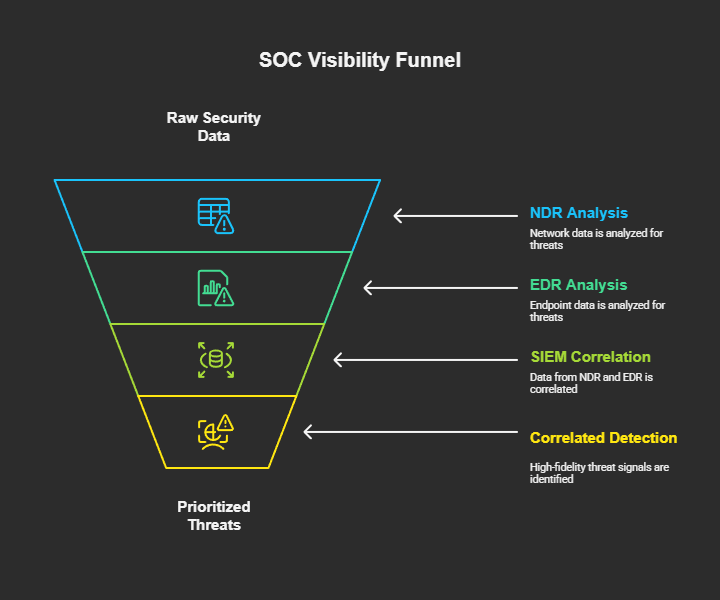

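The SOC triad approach combines SIEM, EDR, and network detection and response (NDR) to provide correlated visibility across log, endpoint, and network data. Rather than each tool producing its own independent alert stream, correlated detection links related signals across the entire attack surface and turns thousands of alerts into a small number of prioritized threat scenarios based on attacker behavior.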
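As a rough illustration of correlation rather than suppression, the sketch below rolls alerts from several tools up into one scenario per entity and ranks the scenarios. The dictionary keys, the `correlate_by_entity` name, and the ranking heuristic (more independent sources first, then more distinct techniques, then highest severity) are assumptions made for this example and do not describe how any vendor's product scores threats.

```python
from collections import defaultdict


def correlate_by_entity(alerts):
    """Roll alerts up into one scenario per entity and rank the scenarios.

    Each alert is a dict with the keys 'entity', 'source', 'technique',
    and 'severity' (an integer; higher is worse).
    """
    scenarios = defaultdict(lambda: {
        "sources": set(),       # which tools saw this entity
        "techniques": set(),    # distinct behaviors observed
        "max_severity": 0,
        "alert_count": 0,
    })
    for alert in alerts:
        scenario = scenarios[alert["entity"]]
        scenario["sources"].add(alert["source"])
        scenario["techniques"].add(alert["technique"])
        scenario["max_severity"] = max(scenario["max_severity"], alert["severity"])
        scenario["alert_count"] += 1

    # Hypothetical heuristic: corroboration across tools outranks raw severity.
    return sorted(
        scenarios.items(),
        key=lambda item: (len(item[1]["sources"]),
                          len(item[1]["techniques"]),
                          item[1]["max_severity"]),
        reverse=True,
    )
```

No alert is dropped in this approach: every alert contributes to a scenario, and analysts work the ranked list of entities instead of the raw stream.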

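Vectra AI's "assume compromise" philosophy treats alert fatigue as a problem of signal quality rather than alert volume. Attack Signal Intelligence applies behavioral detection across network, cloud, identity, SaaS, and IoT/OT environments and identifies real threats through high-fidelity signals. This reduces alert noise by up to 99% (Globe Telecom) without merely filtering or suppressing alerts. Vectra AI's "State of Detection" report describes how the signal clarity delivered through a unified SOC platform gives analysts the confidence to act on every detection.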

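Alert fatigue is the desensitization that SOC analysts develop when exposed to a very high volume of security alerts. When alert volume exceeds human processing capacity, analysts deprioritize alerts, delay their response, or ignore alerts entirely, including genuine threats. Research shows that 63% of security alerts go unaddressed (Vectra AI) and 42% are never investigated (Microsoft/Omdia 2026). The problem is not new, since it traces back to "alarm fatigue" in healthcare, but it has grown more severe as organizations deploy more detection tools and face more sophisticated, higher-volume attack campaigns. Alert fatigue directly increases mean time to detect (MTTD), mean time to respond (MTTR), and breach risk.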

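Alarm fatigue originated in healthcare and describes clinical staff in hospital settings becoming desensitized to the constant sounding of medical device alarms. Alert fatigue applies the same concept to cybersecurity, specifically to security monitoring alerts in SOC environments. The two share a psychological mechanism: excessive notification volume dulls attention, and important signals get missed. The main difference is context. Alarm fatigue refers to clinical alarms, whereas alert fatigue refers to cybersecurity alerts from SIEM, EDR, NDR, and other detection tools. This article covers the cybersecurity context only.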

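Organizations receive an average of 2,992 security alerts per day (Vectra AI), down from 3,832 in 2025 and 4,484 in 2023. Enterprises with 20,000 or more employees may receive more than 3,000 alerts per day. The average is falling, but the problem persists: depending on the source, 42% to 63% of alerts go uninvestigated. Lower volume is a welcome trend, but signal quality and triage capacity remain critical bottlenecks.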

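Manual alert triage costs an estimated $3.3 billion per year in the United States (Vectra AI). Organizations with highly fragmented SOC environments incur 40% higher operational labor costs than those that have consolidated their tooling (Microsoft/Omdia 2026). The global average cost of a data breach is $4.44 million (IBM 2025), and alert fatigue contributes to delayed breach detection. Indirect costs include analyst burnout, higher turnover, recruiting expenses, and the opportunity cost of experienced analysts spending their time on false positives instead of threat hunting.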

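AI-driven triage platforms automate Tier 1 alert investigation, handling enrichment, correlation, and initial assessment to reduce manual workload. Organizations that use AI extensively shorten the breach lifecycle by 80 days and save roughly $1.9 million on average (IBM 2025). Behavioral models lower false positive rates at the root by detecting attacker behavior patterns rather than relying on static signatures. Gartner cautions, however, that an AI-enabled SOC redefines the skills required rather than automatically reducing headcount needs. Analysts shift from repetitive triage to investigating escalated, high-fidelity signals.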

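Burnout in cybersecurity is the physical and mental exhaustion that security professionals experience after prolonged exposure to a high-stress working environment, often one driven by alert fatigue. Between 63% (Tines 2023) and 76% (Sophos 2025) of SOC analysts report experiencing burnout. A 2025 SANS survey found that 70% of analysts with five or fewer years of experience leave within three years. Burnout makes alert fatigue worse: exhausted analysts make more mistakes, investigate fewer alerts, and are more likely to quit, creating a vicious cycle that further erodes SOC capacity.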

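Alert tuning is the process of optimizing detection rules, thresholds, and alert settings to reduce false positives and improve signal quality. Effective tuning starts by identifying the noisiest detection rules by alert volume, measuring the false positive rate for each rule, and disabling or adjusting rules whose false positive rate exceeds 50%. It also includes implementing exception lists for known-benign activity patterns and building a feedback loop between analysts and the detection system, which makes tuning a continuous process rather than a one-off optimization. Monthly tuning reviews are a best practice. Combined with context enrichment and risk-based prioritization, alert tuning is one of the fastest ways to reduce alert fatigue without adding new tools.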